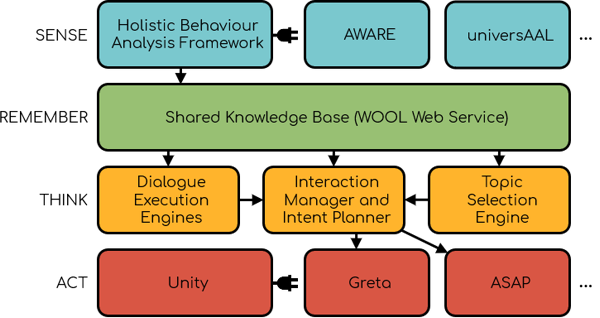

The Agents United Platform follows a layered architecture, with different modules performing specialized roles in each layer. Most modules can be replaced by new implementations, since they communicate with each other through ActiveMQ or REST APIs. You can find further documentation about the platform on our GitHub, in particular in the architecture repository, the demonstrator repository and the tutorials section of the wiki, as well as in the public deliverables of the Council of Coaches project.
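To illustrate why message-based communication makes modules replaceable, here is a minimal sketch in Python. It is not the platform's code and does not use ActiveMQ: `InMemoryBus` is a hypothetical in-process stand-in for a broker, and the topic names (`user/utterance`, `dialogue/topic`) and both selector classes are invented for the example. The point is only that two implementations which listen and publish on the same topics can be swapped without touching the rest of the system.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class InMemoryBus:
    """Hypothetical in-process stand-in for a message broker (not ActiveMQ)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> None:
        # Deliver the message to every handler registered for this topic.
        for handler in list(self._subscribers[topic]):
            handler(message)


class KeywordTopicSelector:
    """Hypothetical module: chooses a topic from a keyword in the utterance."""

    def __init__(self, bus: InMemoryBus) -> None:
        self.bus = bus
        bus.subscribe("user/utterance", self.on_utterance)

    def on_utterance(self, message: dict) -> None:
        topic = "sleep" if "tired" in message["text"].lower() else "activity"
        self.bus.publish("dialogue/topic", {"topic": topic})


class FixedTopicSelector:
    """Hypothetical drop-in replacement: always proposes the same topic."""

    def __init__(self, bus: InMemoryBus) -> None:
        self.bus = bus
        bus.subscribe("user/utterance", self.on_utterance)

    def on_utterance(self, message: dict) -> None:
        self.bus.publish("dialogue/topic", {"topic": "activity"})


def run_demo(selector_cls) -> list:
    """Wire one selector to the bus and return what it published."""
    bus = InMemoryBus()
    received: list = []
    bus.subscribe("dialogue/topic", received.append)
    selector_cls(bus)  # the bus keeps the selector alive via its handler
    bus.publish("user/utterance", {"text": "I feel tired today"})
    return received


if __name__ == "__main__":
    print(run_demo(KeywordTopicSelector))
    print(run_demo(FixedTopicSelector))
```

The consumer of `dialogue/topic` never learns which selector produced the message, which is the property that lets a module be replaced as long as it keeps to the same message contract.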

Some of the modules were developed from the ground up during the Council of Coaches project, and are hosted in the Agents United GitHub repositories. Other modules are externally hosted and integrated into Agents United by their respective owners, who are members of the Agents United Alliance.

SENSE

This layer takes care of gathering data from the environment and interpreting it so it can later be used in dialogues by the embodied conversational agents.

- Holistic Behaviour Analysis Framework: This module gathers data from different sensor technology plugins and performs long-term and short-term analysis to detect behaviour changes over periods ranging from days to months. Submodule plugins are available that connect to specific sensor technologies, such as the AWARE server or the universAAL IoT platform.
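As a rough illustration of what comparing short-term and long-term behaviour can look like, the sketch below flags a change when the mean of the most recent days deviates strongly from the longer baseline before them. This is an assumption made for the example, not the framework's actual analysis: the function name `detect_change`, the 7-day window and the threshold of 2 standard deviations are all hypothetical.

```python
from statistics import mean, pstdev
from typing import Sequence


def detect_change(
    daily_values: Sequence[float],
    short_window: int = 7,    # hypothetical: length of the "recent" period in days
    threshold: float = 2.0,   # hypothetical: deviation, in baseline std devs
) -> bool:
    """Return True if the recent window deviates from the long-term baseline."""
    if len(daily_values) < short_window + 2:
        raise ValueError("need at least short_window + 2 daily values")

    baseline = daily_values[:-short_window]  # long-term history
    recent = daily_values[-short_window:]    # short-term window

    spread = pstdev(baseline)
    if spread == 0:
        # A perfectly flat baseline: any difference in the mean is a change.
        return mean(recent) != mean(baseline)

    deviation = abs(mean(recent) - mean(baseline)) / spread
    return deviation > threshold


if __name__ == "__main__":
    week = [8000, 8200, 7900, 8100, 8000, 7950, 8050]  # made-up daily step counts
    print(detect_change(week * 5))               # stable behaviour
    print(detect_change(week * 4 + [4000] * 7))  # sharp drop in the last week
```

A real analysis would also have to cope with missing days, seasonality and multiple sensor streams; the sketch only shows the baseline-versus-recent comparison.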

REMEMBER

This layer is in charge of storing and delivering the data to be used by the agents, not only coming from the sensors, but also from the feedback gathered during dialogues with the user.

- The Shared Knowledge Base module centralizes the task of storing data, defines the format of that data and acts as a general database of the system.

THINK

This is where the dialogues between the agents and the user are selected, refined and shaped before they are sent to the virtual agents to be played out.

- The Dialogue and Argumentation Framework interprets and executes dialogue games expressed in the Dialogue Game Description Language (DGDL). This provides a structure to dialogues between agents and users, determining possible moves. Moves are later filtered and filled according to data from the user or agent personality.

- The Intent Planner translates the high-level dialogue moves from the Dialogue and Argumentation Framework to more low-level group behaviours for individual agents. It also interprets the user moves and sends feedback to the Shared Knowledge Base.

- The Topic Selection Engine can be used to automatically make decisions on which conversational topic to initiate with the user of the system. It can make decisions based on the information collected in the REMEMBER layer.
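To make the idea of topic selection from stored information more concrete, here is a small sketch in Python. It does not reflect the Topic Selection Engine's real algorithm or data model: the `TopicRecord` fields, the `select_topic` function and the selection rule (skip topics discussed too recently, then prefer the most relevant one, breaking ties by the longest gap) are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class TopicRecord:
    """Hypothetical view of what a knowledge base might hold per topic."""
    name: str
    relevance: float           # assumed score in [0, 1]
    days_since_discussed: int  # assumed bookkeeping from earlier dialogues


def select_topic(
    records: Iterable[TopicRecord],
    min_gap_days: int = 1,  # hypothetical: do not repeat a topic on the same day
) -> Optional[str]:
    """Pick the most relevant topic that has not been discussed too recently."""
    candidates = [r for r in records if r.days_since_discussed >= min_gap_days]
    if not candidates:
        return None
    # Highest relevance wins; ties go to the topic left untouched the longest.
    best = max(candidates, key=lambda r: (r.relevance, r.days_since_discussed))
    return best.name


if __name__ == "__main__":
    records = [
        TopicRecord("sleep", relevance=0.9, days_since_discussed=0),
        TopicRecord("activity", relevance=0.6, days_since_discussed=3),
        TopicRecord("nutrition", relevance=0.6, days_since_discussed=5),
    ]
    print(select_topic(records))  # nutrition
```

Here `sleep` is skipped because it was discussed today, and `nutrition` is preferred over `activity` because both are equally relevant and it has gone longer without being discussed.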

ACT

This is where the user interacts with the system through a 3D representation of the agents rendered in Unity. The agents can be driven by either of two systems, Greta or ASAP, which showcases the modularity of the platform. These systems interpret the dialogue and animate and voice the virtual agents accordingly, based on the Behaviour Markup Language (BML). Both types of agents can be included in the same scene and interact seamlessly with each other.

- Greta is a system for building socio-emotional virtual characters for agents, with automated gestural and group behavior.

- ASAP (Articulated Social Agents Platform) provides multimodal verbal and nonverbal behavior for virtual agents, enabling fluent human-machine interaction.